LLM Observability - Meaning, Tools & Much More

Large Language Model (LLM) observability is the practice of gathering information on LLMs to understand and monitor their behavior. In simpler terms, LLM observability is like "looking inside the model's head" to know what it's doing wrong and why.

What's the Point of LLM Observability?

LLM observability is important because it helps diagnose the inconsistencies of Large Language Models. AI models behave differently from other types of digital services because we can never know for sure what they will do.

If you type "What's the best summer destination?" on Google today and tomorrow, you will probably get the same results. If you ask ChatGPT or other LLMs the same question, however, you're likely to get two different responses. That's when LLM observability comes into play.

The point of LLM observability is therefore to collect the necessary information to increase the consistency of these systems. This information should provide the insights that are necessary to conduct LLM optimization, which is a separate but complementary activity.
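One rough way to put a number on that inconsistency is to ask the same question several times and compare the answers. The sketch below is only an illustration: the sample answers are made up, `consistency_score` is a hypothetical helper, and character-level similarity from Python's `difflib` is a crude stand-in for the semantic comparisons a real observability tool would likely use.

```python
from difflib import SequenceMatcher
from itertools import combinations


def consistency_score(responses: list[str]) -> float:
    """Average pairwise text similarity, from 0.0 (nothing shared) to 1.0 (identical)."""
    pairs = list(combinations(responses, 2))
    if not pairs:
        return 1.0  # zero or one response is trivially consistent
    ratios = [SequenceMatcher(None, a, b).ratio() for a, b in pairs]
    return sum(ratios) / len(ratios)


# Hypothetical answers to the same prompt, collected on different days.
identical = ["Lisbon is a great choice."] * 3
varied = [
    "Lisbon is a great choice.",
    "Consider the Greek islands.",
    "It depends on your budget.",
]

print(consistency_score(identical))  # 1.0
print(consistency_score(varied))     # lower than 1.0
```

A falling score for the same prompt over time would be one signal worth investigating, although it says nothing about which answer is actually correct.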

LLM Observability Metrics

To diagnose Large Language Models via observability, it's essential to track metrics that tell us whether the system is inconsistent. Among others, these include:

- Response quality: a general analysis of the quality of the response provided by the LLM, focusing on aspects like relevance (does the answer fit the question?), coherence (does the answer make sense?), and readability (is the answer clear and written in appropriate language?).

- Hallucinations: AI hallucinations occur when LLMs confidently invent something that's not true or makes no sense. Identifying these hallucinations is a key aspect of LLM observability.

- Harmful behavior: everything that can hurt LLM users, such as personal data leaks, biased/racist remarks, and harmful instructions, especially when related to sensitive topics.

- Performance over time: also known as "drift", this metric lets us know whether the LLM is performing better or worse over time.

- Latency: concerns how fast the LLM provides answers.

- Cost: the actual financial cost of an AI-generated response.
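Latency, cost, and drift are the easiest of these metrics to compute yourself. The following is a minimal sketch under stated assumptions: `fake_llm` is a hypothetical stand-in for a real model call, the per-token prices are invented, tokens are counted by splitting on whitespace (real tokenizers count differently), and drift is reduced to the change in average quality score between two periods.

```python
import time
from dataclasses import dataclass
from statistics import mean

# Assumed prices in USD per 1,000 tokens; real prices depend on provider and model.
PRICE_PER_1K_INPUT = 0.0005
PRICE_PER_1K_OUTPUT = 0.0015


@dataclass
class CallMetrics:
    latency_s: float
    input_tokens: int
    output_tokens: int
    cost_usd: float


def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a real model call."""
    return "Lisbon is a popular summer destination."


def count_tokens(text: str) -> int:
    """Crude whitespace token count, used here only for illustration."""
    return len(text.split())


def observe_call(prompt: str) -> tuple[str, CallMetrics]:
    """Call the model and record latency, token counts, and estimated cost."""
    start = time.perf_counter()
    response = fake_llm(prompt)
    latency = time.perf_counter() - start
    tokens_in = count_tokens(prompt)
    tokens_out = count_tokens(response)
    cost = (tokens_in * PRICE_PER_1K_INPUT + tokens_out * PRICE_PER_1K_OUTPUT) / 1000
    return response, CallMetrics(latency, tokens_in, tokens_out, cost)


def drift(baseline_scores: list[float], recent_scores: list[float]) -> float:
    """Change in average quality score; negative means quality got worse."""
    return mean(recent_scores) - mean(baseline_scores)


response, metrics = observe_call("What's the best summer destination?")
print(metrics)
print(drift([0.8, 0.9], [0.6, 0.7]))  # negative: quality dropped
```

Response quality, hallucinations, and harmful behavior are much harder to score automatically, which is a large part of why dedicated tools exist.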

8 Best LLM Observability Tools

LLM observability tools are services and software designed to help users conveniently identify, track, and optimize the metrics listed above.

The following are 8 of the most complete and established LLM observability tools right now (listed in no particular order):

- Langfuse

- Lunary

- Arize AI

- LangSmith

- HoneyHive

- Weights & Biases

- Datadog

- PromptLayer

Below, we will be taking a closer look at what each of these observability tools can do.

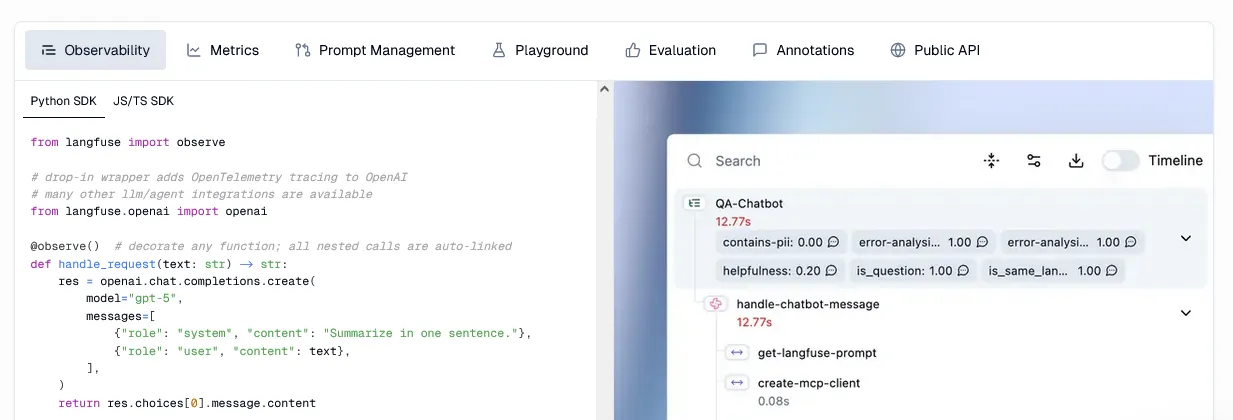

1. Langfuse

Using Langfuse is like having a button that hits "Record" on every LLM action. The program saves all conversations and automatically tracks metrics like speed, cost, and hallucination frequency. It also offers useful AI optimization resources, including A/B testing and prompt comparison.

2. Lunary

Lunary is a service that provides a complete LLM observability dashboard. Users can monitor LLM usage, instantly collect metrics such as cost and topic, and identify areas for improvement. The good news? Lunary offers a free trial for personal projects with up to 10,000 monthly events and three projects.

3. Arize AI

Arize AI delivers LLM observability via three distinctive products. Arize AX, a platform for engineering AI models "from development to production", is the biggest highlight. Additionally, you get the open-source prompt engineering suite Phoenix and the open-source instrumentation package OpenInference.

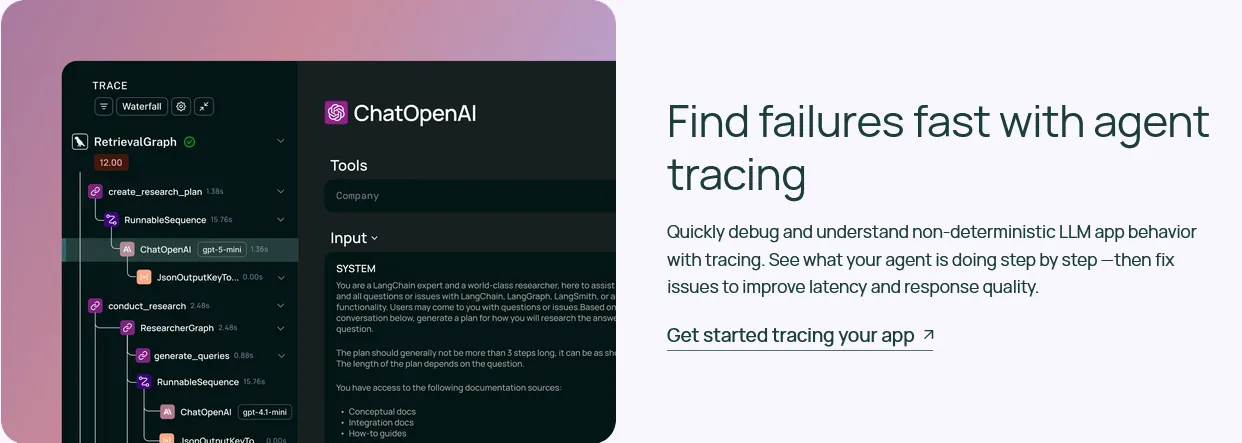

4. LangSmith

LangSmith is the LLM observability platform of LangChain, and it's an AI monitoring beast! The agent tracing tool is perfect for quickly identifying inconsistencies, the Insights tool identifies helpful user patterns, and the custom dashboard lists business-essential metrics like latency and cost.

5. HoneyHive

HoneyHive is a service that puts your AI models to the test by tracking answers and providing scores to query responses. It's also one of the best tools for comparing different models and selecting the best, an essential aspect of AI integration.

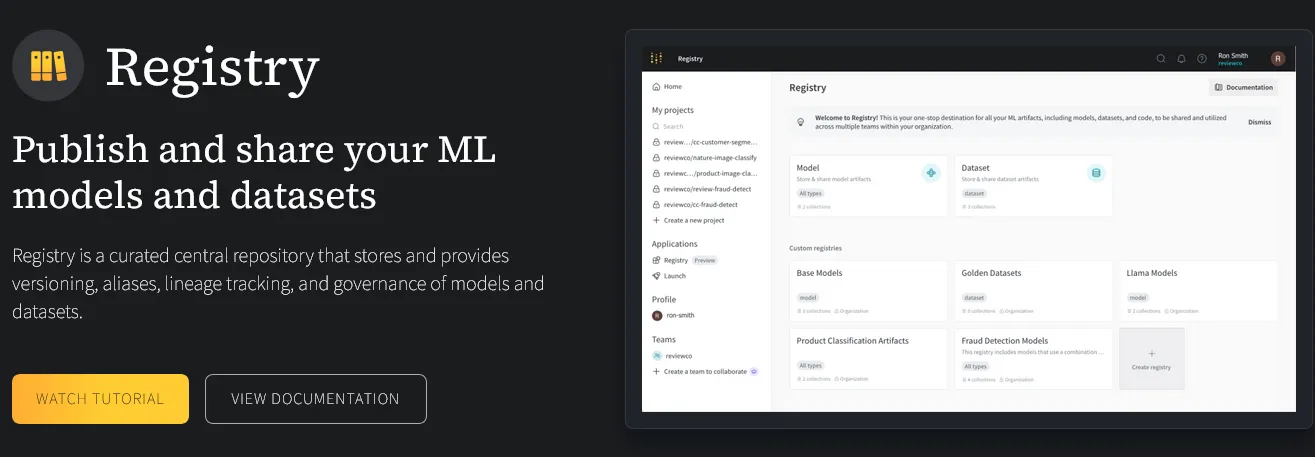

6. Weights & Biases

Best suited for the technical user, Weights & Biases is an LLM observability tool designed for teams and experts training their own models. The Weights & Biases platform includes experiments, sweeps (i.e., hyperparameter optimization), data tables, and dedicated reports.

7. Datadog

Datadog is a company offering diverse digital monitoring services, including comprehensive OpenAI monitoring. While some LLM observability tools can be quite complex to navigate, Datadog compiles all relevant data in a simple-to-use dashboard with all essential info: number of requests, tokens per request, response time, estimated cost, and more.

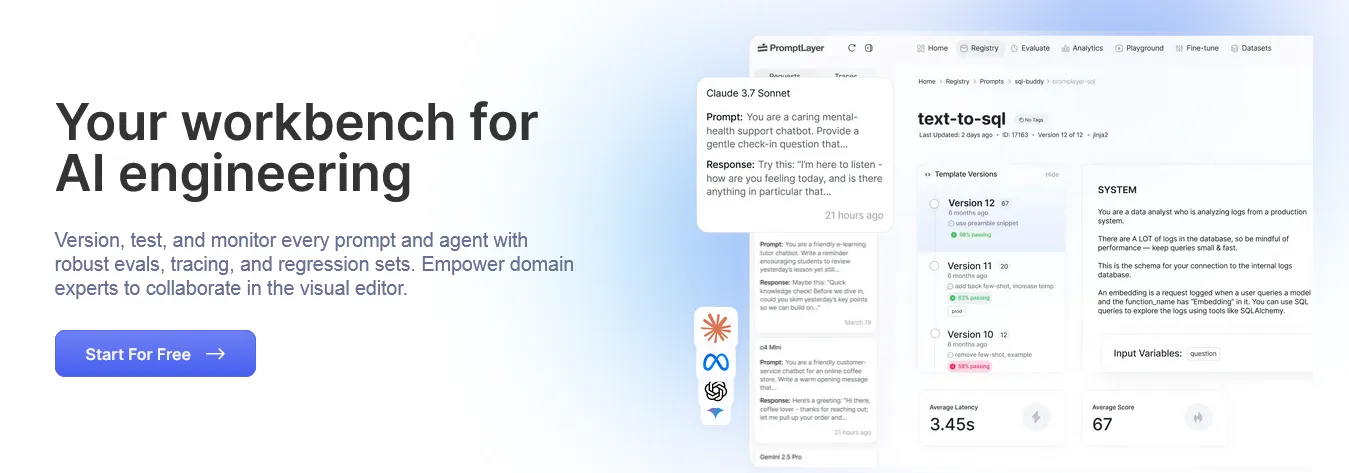

8. PromptLayer

As the name implies, PromptLayer is, first and foremost, a prompt management tool. With A/B tests and side-by-side usage/latency comparisons, PromptLayer also offers services such as universal model tracking, metadata analytics, real-time auditing, and model fine-tuning.

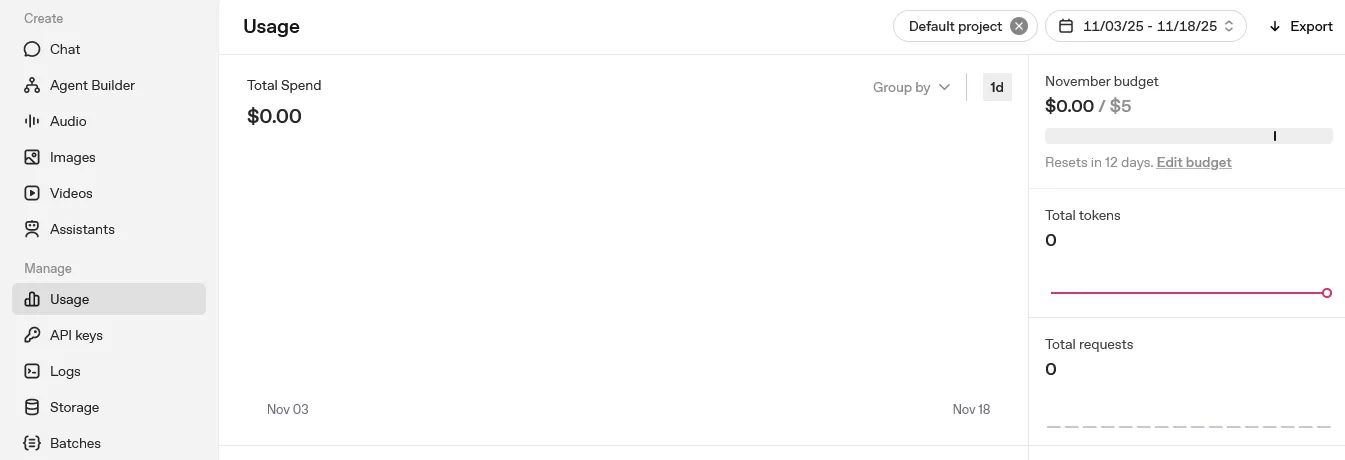

Extra: OpenAI Dashboard

For an extra, why not try the OpenAI Dashboard, OpenAI's built-in observability tool? Although it covers only OpenAI's own models, this handy LLM observability resource is a good fit for casual AI users looking for free insights into their OpenAI usage.

What LLM Observability Tools Actually Do

The features of LLM observability tools vary widely from one product to another, but they usually cover three fundamentals:

- Logging: this is the tool's ability to save data. Data includes the model used, the prompt, the model's response to the prompt, the response delivery time, related user feedback, and so forth.

- Analyzing: based on logged data, the tool displays average quality and ranks responses according to speed or cost. The tool can also list detected hallucinations, track harmful behavior, and more.

- Testing: the tool can offer features for testing prompts and other aspects of LLM usage to determine which solutions are the most effective.

Additional resources provided by LLM observability tools include prompt versioning and automatic alerts for quality, hallucinations, cost increase, and so forth.
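To make these ideas concrete, here is a toy in-memory version of logging, analyzing, testing, and alerting. It is a sketch rather than how any of the tools above actually work: `LogRecord`, `ObservabilityLog`, the field names, and the sample data are all hypothetical.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Optional


@dataclass
class LogRecord:
    model: str
    prompt_version: str  # hypothetical label such as "v1" or "v2"
    prompt: str
    response: str
    latency_s: float
    cost_usd: float
    user_rating: Optional[int] = None  # e.g. 1-5 feedback, if any was given


class ObservabilityLog:
    def __init__(self) -> None:
        self.records: list[LogRecord] = []

    # Logging: save every interaction.
    def log(self, record: LogRecord) -> None:
        self.records.append(record)

    # Analyzing: rank responses by speed, slowest first.
    def slowest(self, n: int = 3) -> list[LogRecord]:
        return sorted(self.records, key=lambda r: r.latency_s, reverse=True)[:n]

    # Testing: compare prompt versions by average user rating.
    def rating_by_version(self) -> dict[str, float]:
        grouped: dict[str, list[int]] = {}
        for r in self.records:
            if r.user_rating is not None:
                grouped.setdefault(r.prompt_version, []).append(r.user_rating)
        return {version: mean(ratings) for version, ratings in grouped.items()}

    # Alerting: flag when total spend exceeds a budget.
    def cost_alert(self, budget_usd: float) -> bool:
        return sum(r.cost_usd for r in self.records) > budget_usd


log = ObservabilityLog()
log.log(LogRecord("model-a", "v1", "Best summer destination?", "Lisbon.", 1.8, 0.002, 3))
log.log(LogRecord("model-a", "v2", "Name one great summer destination.", "Lisbon.", 0.9, 0.001, 5))
log.log(LogRecord("model-a", "v2", "Name one great summer destination.", "Crete.", 1.1, 0.001, 4))

print(log.slowest(1)[0].prompt_version)  # v1
print(log.rating_by_version())           # v2 averages higher than v1
print(log.cost_alert(budget_usd=0.003))  # True
```

Real tools add persistent storage, dashboards, and automated quality checks on top, but the underlying loop of record, aggregate, compare, and alert is much the same.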

LLM Observability: Advantages & Challenges

LLM observability can be extremely advantageous for businesses and freelancers, but it also poses hard challenges that need to be considered.

Let's start by looking at the main benefits of LLM observability:

- Users can understand better how the LLM works.

- They can quickly identify errors (like hallucinations) and take the necessary measures to correct them.

- They can increase overall LLM safety by detecting harmful behaviors.

- They can reduce costs.

As for the challenges of LLM observability, these include:

- LLMs generate mountains of data, which can be hard to store and analyze, even with the help of an LLM observability tool.

- AI models change rapidly, meaning prompts that worked perfectly yesterday may not work as well today. This forces freelancers and teams to constantly monitor results; it's a never-ending task.

- Reviewing response quality is partly a judgment call, as you need to evaluate subjective aspects like "creativity". This means that LLM observability isn't always as exact as intended.

Another less-discussed challenge of LLM observability is balancing technical and human aspects. This challenge is highlighted by a study (PDF) published in the European Journal of Computer Science and Information Technology. The study found that around 65% of production failures arise from the inability to correlate technical LLM enhancements with the actual needs of the humans using these models.

Does LLM Observability Work?

LLM observability is a fairly new "art form", but we already have evidence that it works. A Future AGI case study involving an AI customer support bot determined that, through observability tactics, the company "saw a 60% reduction in factual inaccuracies reported by users".

This reduction in factual inaccuracies had a real impact on the company's operations, leading to a "40% decrease in escalations to human support agents". In other words, roughly two in every five conversations that used to be handed over to human customer support agents no longer needed to be!

The study also shows improvements in other areas, including:

- A 30% reduction in response time (from 7 seconds to 4.9 seconds);

- A 22% reduction in costs, even with a 15% increase in chatbot usage;

- Improved customer satisfaction (approval raised from 3.2 to 4.1/5 in just six months).
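These figures can be sanity-checked with a few lines of arithmetic. The latency check uses only the numbers quoted above. The other two values are derived here and are not reported by the case study; the per-interaction saving in particular assumes that "chatbot usage" counts interactions and that both percentages cover the same period.

```python
# Figures quoted from the case study.
old_latency_s = 7.0
latency_reduction = 0.30
cost_reduction = 0.22
usage_increase = 0.15
old_rating, new_rating = 3.2, 4.1

# A 30% reduction from 7 seconds.
new_latency_s = round(old_latency_s * (1 - latency_reduction), 2)

# Assumption: "usage" counts interactions, so cost per interaction
# is total cost divided by usage.
relative_cost_per_interaction = (1 - cost_reduction) / (1 + usage_increase)
per_interaction_saving_pct = round((1 - relative_cost_per_interaction) * 100, 1)

# Relative improvement in the satisfaction score.
rating_gain_pct = round((new_rating - old_rating) / old_rating * 100, 1)

print(new_latency_s)               # 4.9
print(per_interaction_saving_pct)  # 32.2
print(rating_gain_pct)             # 28.1
```

Under that assumption, total costs fell by 22% while the cost of each individual interaction fell by roughly a third, since more interactions were being served for less money.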

Even though we need more data and studies to determine how effective LLM observability can be, this case study suggests that LLM observability can deliver measurable results, substantially improving a company's operations and reducing costs.

Is LLM Observability Enough?

LLM observability is key to understanding how Large Language Models work; however, it's just the first step of your LLM performance improvement journey.

Observability tactics must be used in conjunction with LLM optimization strategies to deliver results. After all, observability is what you see, while optimization is what you do. To act, we first need to observe and track behavior accurately, but it's only through practices like model matching, prompt engineering, and fine-tuning that we can truly make the most of the AI models we use.